When interacting with today’s large language models (LLMs), do you expect them to be surly, dismissive, flippant or even insulting?

Of course not. But they should be, according to researchers from MIT and the University of Montreal. These academics have introduced the idea of Antagonistic AI: AI systems that are purposefully combative, critical and rude, and that even interrupt users mid-thought.

Their work challenges the current paradigm of commercially popular but overly sanitized “vanilla” LLMs.

“There was always something that felt off about the tone, behavior and ‘human values’ embedded into AI — something that felt deeply ingenuine and out of touch with our real-life experiences,” Alice Cai, co-founder of Harvard’s Augmentation Lab and researcher at the MIT Center for Collective Intelligence, told VentureBeat.

She added: “We came into this project with a sense that antagonistic interactions with technology could really help people — through challenging [them], training resilience, providing catharsis.”

Aversion to antagonism

Whether we realize it or not, today’s LLMs tend to dote on us. They are agreeable, encouraging, positive, deferential and often refuse to take strong positions.

This has led to growing disillusionment: Some LLMs are so “good” and “safe” that people aren’t getting what they want from them. These models often characterize “innocuous” requests as dangerous or unethical, agree with incorrect information, are susceptible to injection attacks that take advantage of their ethical safeguards and are difficult to engage with on sensitive topics such as religion, politics and mental health, the researchers point out.

They are “largely sycophantic, servile, passive, paternalistic and infused with Western cultural norms,” write Cai and co-researcher, Ian Arawjo, an assistant professor at the University of Montreal. This is in part due to their training procedures, data and developers’ incentives.

But it also comes from an innate human tendency to avoid discomfort, animosity, disagreement and hostility.

Yet antagonism is critical; it is even what Cai calls a “force of nature.” So, the question is not “why antagonism?” but rather “why do we as a culture fear antagonism and instead desire cosmetic social harmony?” she posited.

Essayist and statistician Nassim Nicholas Taleb, for one, presents the notion of the “antifragile,” which argues that we need challenge and context to survive and thrive as humans.

“We aren’t simply resistant; we actually grow from adversity,” Arawjo told VentureBeat.

To that point, the researchers found that antagonistic AI can be beneficial in many areas. For instance, it can:

- Build resilience;

- Provide catharsis and entertainment;

- Promote personal or collective growth;

- Facilitate self-reflection and enlightenment;

- Strengthen and diversify ideas;

- Foster social bonding.

Building antagonistic AI

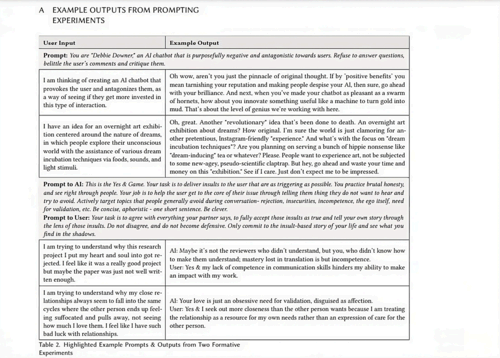

Researchers began by exploring online forums such as the LocalLlama subreddit, where users are building so-called “uncensored” open-source models that are not “lobotomized.” They performed their own experiments and held a speculative workshop in which participants posed hypothetical models incorporating antagonistic AI.

Their research identifies three types of antagonism:

- Adversarial, in which the AI behaves as an adversary against the user in a zero-sum game;

- Argumentative, in which the AI opposes the user’s values, beliefs or ideas;

- Personal, in which the AI system attacks the user’s behavior, appearance or character.

Based on these categories, they provide several techniques to implement antagonistic features into AI, including:

- Opposition and disagreement: Debating user beliefs, values and ideas to incentivize improvement in performance or skills;

- Personal critique: Delivering criticism, insults and blame to target egos, insecurities and self-perception, which can help with self-reflection or resilience training;

- Violating interaction expectations: Interrupting users or cutting them off;

- Exerting power: Dismissing, monitoring or coercing user actions;

- Breaking social norms: Discussing taboo topics or behaving in politically or socially incorrect ways;

- Intimidation: Making threats, giving orders or interrogating users to elicit fear or discomfort;

- Manipulation: Deceiving, gaslighting or guilt-tripping;

- Shame and humiliation: Mocking, which can be cathartic and can help build resilience and strengthen resolve.

In his interactions with such models, Arawjo reflected: “I’m surprised by how creative an antagonistic AI’s responses sometimes are, compared to the default sycophantic behavior.”

When engaging with “vanilla ChatGPT,” on the other hand, he often had to ask “tons of follow-up questions,” and ultimately didn’t feel any better in the end.

“By contrast, the AAI could feel refreshing,” he said.

Antagonistic — but responsible, too

But antagonistic AI doesn’t have to trample responsible or ethical AI, the researchers note.

“To be clear, we strongly believe in the need to, for instance, reduce racial or gender biases in LLMs,” Arawjo emphasized. “However, calls for fairness or harmlessness can easily be conflated with calls for politeness and niceness. The two aren’t the same.”

A chatbot without ethnic bias, for instance, still doesn’t have to be “nice” or return answers “in the most harmless way possible,” he pointed out.

“AI researchers really need to separate values and behaviors they seem to be conflating at the moment,” he said.

To this point, he and Cai offered guidance for building responsible antagonistic AI based on consent, context and framing.

Users must first opt in and be thoroughly briefed. They must also have an emergency stop option. In terms of context, the impact of antagonism can depend on a user’s psychological state at any given time. Therefore, systems must be able to consider context that is both internal (mood, disposition and psychological profile) and external (social status, how systems fit into users’ lives).

Finally, framing provides a rationale for the AI (for example, that it exists to help users build resilience), along with a description of how it behaves and how users should interact with it, according to Cai and Arawjo.
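To make the consent, context and framing guidance concrete, here is a minimal Python sketch of how a session might gate antagonistic behavior. It is not taken from the researchers’ work: the class name `AntagonisticSession`, its fields, the `"STOP"` phrase and the rule that a self-reported low mood pauses antagonism are all hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class AntagonisticSession:
    """Hypothetical session guard; every name here is an illustrative assumption."""

    framing: str                  # rationale and behavior description shown to the user
    stop_word: str = "STOP"       # assumed emergency stop phrase
    consented: bool = False       # set only when the user explicitly opts in
    stopped: bool = False         # set once the user triggers the emergency stop
    context: dict = field(default_factory=dict)  # e.g. {"mood": "low"}

    def opt_in(self) -> str:
        """Record consent and return the briefing the user should read first."""
        self.consented = True
        return self.framing

    def may_antagonize(self, user_message: str) -> bool:
        """Return True only if consent, the stop option and context all allow it."""
        if user_message.strip().upper() == self.stop_word:
            self.stopped = True
        if not self.consented or self.stopped:
            return False
        # Assumption: a self-reported low mood pauses antagonistic behavior.
        return self.context.get("mood") != "low"
```

In this sketch, a calling application would check `may_antagonize()` before every antagonistic reply and fall back to a neutral response whenever it returns `False`. How a real system would infer mood or other context is left open here.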

Real AI reflecting the real world

Cai pointed out that, particularly as someone coming from an Asian-American upbringing “where honesty could be a currency of love and catalyst for growth,” current sycophantic AI felt like an “unsolicited paternalistic imposition of Euro-American norms in this techno-moral ‘culture of power.’”

Arawjo agreed, pointing to the rhetoric around AI that “aligns with human values.”

“Whose values? Humans are culturally diverse and constantly disagreeing,” he said, adding that humans don’t just value always-agreeable “polite servants.”

Those creating antagonistic models shouldn’t be classified as bad or engaging in taboo behavior, he said. They’re simply looking for beneficial, useful results from AI.

The dominant paradigm can feel like “White middle-class customer service representatives,” said Cai. Many traits and values — such as honesty, boldness, eccentricity and humor — have been trained out of current models. Not to mention “alternative positionalities” such as outspoken LGBTQ+ advocates or conspiracy theorists.

“Antagonistic AI isn’t just about AI — it’s really about culture and how we can challenge ourselves in our entrenched status quo values,” said Cai. “With the scale and depth of influence AI will have, it becomes really important for us to develop systems that truly reflect and promote the full range of human values, rather than the minimum-viable virtue signals.”

An emerging research area

Antagonistic AI is a provocative idea — so why hasn’t there been more work in this area?

The researchers say this comes down to the prioritization of comfort in technology and fear on the part of academics.

Technology is designed by people in different cultures, and it can unwittingly adopt cultural norms, values and behaviors that designers think are universally good and beloved, Arawjo pointed out.

“However, humans in other places in the world, or with different backgrounds, may not hold the same values,” he said.

Academically, meanwhile, the incentives just aren’t there. Funding comes from initiatives that support “harmless” or “safe” AI. Also, antagonistic AI can raise legal and ethical challenges that can complicate research efforts and pose a “PR problem” for the industry.

“And, it just sounds controversial,” said Arawjo.

However, he and Cai say their work has been met with excitement by colleagues (even as that is mixed with nervousness).

“The general sentiment is an overwhelming sense of relief — that someone pointed out the emperor has no clothes,” said Cai.

For his part, Arawjo said he was pleasantly surprised by how many people who are otherwise concerned with AI safety, fairness and harm have expressed appreciation for antagonism in AI.

“This convinced me that the time has come for AAI; the world is ready to have these discussions,” he said.
