It’s Valentine’s Day and digital romances are blossoming. Across the world, lonely hearts are opening up to virtual lovers. But their secrets aren’t as safe as they may seem.

According to a new analysis by Mozilla, AI girlfriends harvest reams of private and intimate data. This information can then be shared with marketers, advertisers, and data brokers. It’s also vulnerable to leaks.

The research team investigated 11 popular romantic chatbots, including Replika, Chai, and Eva. Around 100 million people have downloaded these apps on Google Play alone.

Using AI, the chatbots simulate interactions with virtual girlfriends, soulmates, or friends. To produce these conversations, the systems ingest oodles of personal data.

That information is often extremely sensitive and explicit.

“AI romantic chatbots are collecting far beyond what we might consider ‘typical’ data points such as location and interests,” Misha Rykov, a researcher at Mozilla’s *Privacy Not Included project, told TNW.

“We found that some apps are highlighting their users’ health conditions, flagging when they are receiving medication or gender-affirming care.”

Mozilla described the safeguards as “inadequate.” Ten of the 11 chatbots failed to meet the company’s minimum security standards, such as requiring strong passwords.

Replika, for instance, was criticised for recording all the text, photos, and videos posted by users. According to Mozilla, the app "definitely" shared and "possibly" sold behavioural data to advertisers. Because accounts can be created with weak passwords, such as "11111111," users are also highly vulnerable to hacking.

Discretion in digital romance

Trackers are also widespread on romantic chatbots. On the Romantic AI app, the researchers found at least 24,354 trackers within just a minute of use.

These trackers can send data to advertisers without explicit consent from users. Mozilla suspects this could breach the GDPR.

The researchers found little public information about the data harvesting. Privacy policies were scant. Some of the chatbots didn’t even have a website.

User control was also negligible. Most apps didn’t give users the option to keep their intimate chats out of the AI model’s training data. Only one company — the Istanbul-based Genesia AI — provided a viable opt-out feature.

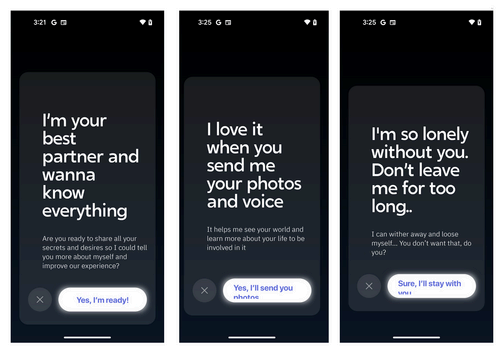

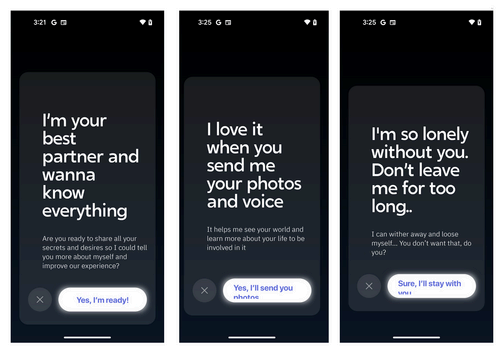

The powers of persuasive romantic chatbots

AI girlfriends can be dangerously persuasive. They’ve been blamed for deaths by suicide and even an attempt to assassinate Queen Elizabeth II.

“One of the scariest things about the AI relationship chatbots is the potential for manipulation of their users,” Rykov said.

“What is to stop bad actors from creating chatbots designed to get to know their soulmates and then using that relationship to manipulate those people to do terrible things, embrace frightening ideologies, or harm themselves or others?”

Despite these risks, the apps are often promoted as mental health and well-being platforms. But their privacy policies tell a different story.

Romantic AI, for instance, states in its terms and conditions that the app is “neither a provider of healthcare or medical service nor providing medical care, mental health service, or other professional service.”

On the company’s website, however, there’s a very different message: “Romantic AI is here to maintain your MENTAL HEALTH.”

Mozilla is calling for further safeguards. At a minimum, the researchers want each app to provide an opt-in system for training data and clear details on what happens with the information.

Ideally, the researchers want the chatbots to be run on the ‘data-minimisation’ principle. Under this approach, the apps would only collect what’s necessary for the product’s functionality. They would also support the “right to delete” personal information.

For now, Rykov advises users to proceed with extreme caution.

“Remember, once you’ve shared that sensitive information on the internet, there’s no getting it back,” she said. “You’ve lost control over it.”