
As its name suggests, Rambus (NASDAQ:RMBS) is a semiconductor company that produces interface (or bus) chipsets, that is, the chips located between the memory modules and the processors used in servers. It also develops the technology that some manufacturers use to build high bandwidth memory for bundling with Nvidia’s (NASDAQ:NVDA) GPU accelerators, which explains why its share price has surged since September 2022.

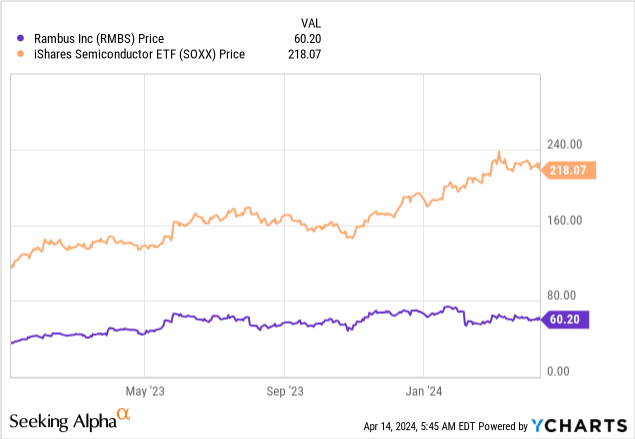

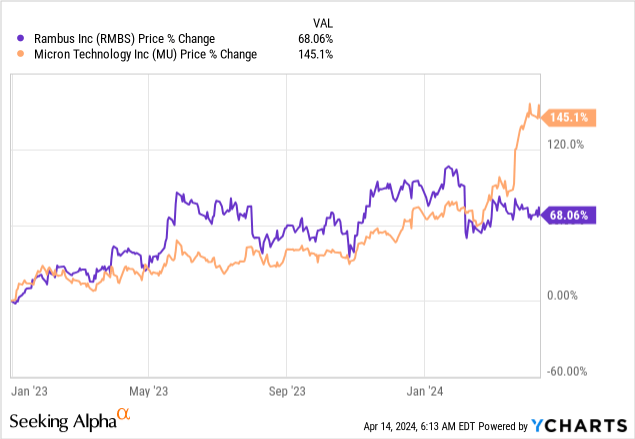

As a result, its trailing price-to-cash flow multiple of 33.14x is nearly twice its five-year average, fueled mostly by the enthusiasm around AI. However, since the beginning of 2024, as per the chart below, its share price has underperformed the iShares Semiconductor ETF (SOXX).

The objective of this thesis is to show that this retrenchment is not a buying opportunity because of the competition for AI-enabling high-speed memory chips. At the same time, it is important to adopt a dose of realism, as the economic environment is still uncertain, with elevated interest rates and inflation that remains relatively high at 3.5%. This puts pressure on demand for non-AI components used in data centers.

I start by detailing how Rambus’ products make their way into the world’s largest data centers.

Rambus IP Drives SK Hynix and Samsung HBMs

First, having the right interface chips is crucial for the rapid exchange of data between the processor and memory chips within servers, irrespective of whether it is the commonly used DDR4 or the latest DDR5 DRAMs (dynamic random-access memory) manufactured by Samsung (OTCPK:SSNLF) or SK Hynix. In this respect, Rambus has developed the technology that allows more information to be pushed through at increasingly fast speeds.

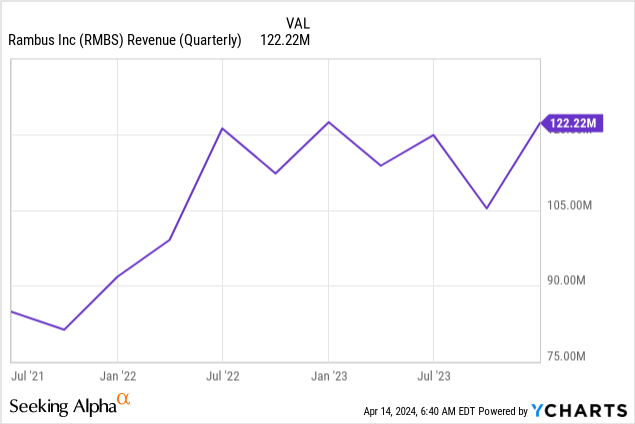

Looking at AI, some will remember that SK Hynix was able to rapidly scale the manufacturing of High Bandwidth Memory, or HBM, chips in the second half of 2022. These are the chips that were initially paired with Nvidia’s H100 accelerator GPUs by OEMs and hyperscalers to build the AI infrastructure that supports ChatGPT-like applications. Thus, Rambus, which sells its intellectual property, or IP, to the South Korean company, saw its quarterly sales surge in 2022, as shown in the chart below. Subsequently, sales remained more or less subdued before surging in the fourth quarter of 2023 (Q4), which is explained by Nvidia diversifying its supply chain to include Samsung, which also procures IP from Rambus.

Now, with its IP driving the HBMs produced by these large East Asian memory manufacturers and Nvidia consistently beating topline estimates and raising revenue guidance, you would have expected Rambus to continue benefiting from higher demand and the above chart to continue trending higher. However, the price action in the introductory chart tends to indicate that investors expect something else, namely that Rambus may not continue to be an indirect beneficiary of Generative AI with its IP portfolio, in the same way as before.

This may be due to the emergence of a new competitor.

Enter Competitor Micron

After being late to the Gen AI party, Micron (MU), the giant American memory chip manufacturer, joined in a big way in February 2024 with its HBM3e chips, which boast 24GB of memory and are proving more powerful than the HBM manufactured by the South Korean suppliers I talked about earlier. It started mass production of the HBM3e after securing an order from Nvidia for the H200 AI GPU, which is much more advanced than the H100.

Digging deeper, according to a comparison table by TrendForce, Samsung and SK Hynix also have HBM3e of the same 24 GB specification, with speeds varying from 8 Gbps to 9.2 Gbps. Still, it is Micron that appears to have been the first to send samples to Nvidia for qualification purposes. Additionally, Micron claims that its high bandwidth memory consumes about 30% less power than its competitors’ products, a solid argument given the energy-hungry nature of GPUs compared to conventional CPUs. In this way, Micron’s chips can enable better efficiency and reduce data center operating costs.

Now, the problem is that Micron does not appear to source IP for manufacturing its HBMs from Rambus, as it uses its proprietary 1-beta technology for the purpose. This conclusion comes from my investigation into Rambus’ earnings transcripts, where the names of the two South Korean suppliers are repeatedly mentioned. Instead, as per the latest SEC filings and Micron’s corporate website, it does use Rambus’ IP, but only for DRAM-related interface chips, namely for DDR5.

Further evidence that Rambus is being disadvantaged by, rather than benefiting from, Micron’s rapid commercialization of its HBM3e comes from the price action. Rambus’ shares started underperforming in February this year, which coincided with the U.S. memory maker’s shares surging, as shown in the chart below.

Thus, investor sentiment turning bullish toward Micron after its Nvidia contract was, conversely, detrimental to Rambus’ stock. Competition may also be the reason why analysts have downgraded revenue projections for the longer term, namely the full year 2024 ending in December, by about $29 million.

Lower IP Revenue and Risks to the non-AI Business

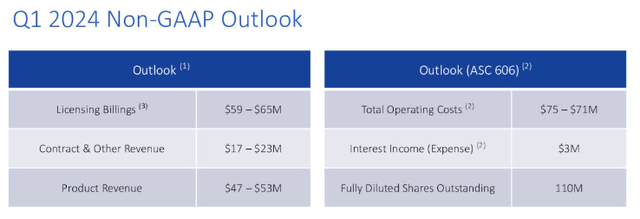

Going into details, one of the reasons for the downgrade is lower revenue from royalties, which include patent and technology licenses and related renewals. Thus, Licensing Billings for the first quarter of 2024 are expected to be $62 million, the midpoint of the $59 million to $65 million guidance range, as per the chart below. This is down from $66.2 million in Q4.

Company presentation (seekingalpha.com)
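The guidance arithmetic quoted above can be checked with a short Python sketch. The figures come from the text, while the function and variable names are my own and purely illustrative.

```python
def midpoint(low: float, high: float) -> float:
    """Midpoint of a guidance range."""
    return (low + high) / 2


def pct_change(previous: float, current: float) -> float:
    """Percentage change from `previous` to `current`."""
    return (current - previous) / previous * 100


# Figures quoted in the article, in millions of USD.
q1_guidance_mid = midpoint(59.0, 65.0)  # Q1 2024 Licensing Billings guidance range
q4_licensing_billings = 66.2            # Q4 2023 Licensing Billings

decline = pct_change(q4_licensing_billings, q1_guidance_mid)
print(f"Q1 midpoint: ${q1_guidance_mid:.1f}M, sequential change: {decline:.1f}%")
```

On these numbers, the midpoint guidance implies a sequential decline of roughly 6%.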

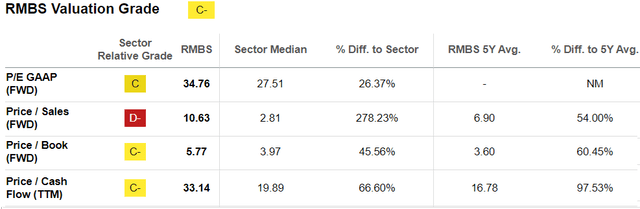

Now, the sequential decline in Licensing Billings is not substantial, and there can be a lag between the time a customer is billed and the time the revenue accrues to the income statement. Moreover, Samsung, Rambus’ client for high bandwidth memory IP, should release a 36 GB chip in the second half of this year, which is superior to Micron’s in terms of capacity. This could represent additional sales for Rambus, but for a stock that already trades at 10.63 times forward sales, relative to the sector median of 2.81x, investors seem to have already priced in the opportunities.

Valuation Metrics (seekingalpha.com)
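To put the valuation gap in perspective, the sketch below computes the premium implied by the two forward price-to-sales multiples quoted above. Only the two multiples come from the article; the variable names are hypothetical.

```python
# Forward price-to-sales multiples quoted in the article.
forward_ps = 10.63        # Rambus
sector_median_ps = 2.81   # sector median

# How many times the sector median the stock trades at, and the premium in percent.
premium_multiple = forward_ps / sector_median_ps
premium_pct = (premium_multiple - 1) * 100

print(f"Rambus trades at {premium_multiple:.2f}x the sector median "
      f"(a premium of about {premium_pct:.0f}%)")
```

In other words, the stock trades at close to four times the sector median on this metric.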

Along the same lines, now that Micron has penetrated the HBM market, things may prove more difficult for its competitors.

Moreover, a look at non-AI (traditional) demand for memory chips reveals little reason to be optimistic. In this respect, the Semiconductor Industry Association (SIA) forecasts a rebound in sales of 13.1% this year on the back of AI and memory, following an 8.2% slump in 2023. However, to be realistic, since a lot of the investment is currently going into AI in an economic climate where borrowing costs remain elevated and potentially limit the spending power of businesses, forthcoming data center upgrade cycles may be impacted.
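As a rough illustration of what the SIA figures imply, the sketch below compounds the 2023 slump with the forecast 2024 rebound. It assumes both percentages apply to the same industry-wide sales base, which is my simplification and not something the SIA data cited here spells out.

```python
# Year-over-year changes cited from the SIA, expressed as fractions.
change_2023 = -0.082   # 8.2% slump in 2023
change_2024 = 0.131    # forecast 13.1% rebound in 2024

# Compound the two changes to get 2024 sales relative to the 2022 level.
sales_vs_2022 = (1 + change_2023) * (1 + change_2024)
net_change_pct = (sales_vs_2022 - 1) * 100

print(f"Forecast 2024 sales vs. 2022: {net_change_pct:+.1f}%")
```

Under that assumption, the forecast rebound would leave industry sales only about 4% above their 2022 level, which is consistent with a cautious reading of the headline growth figure.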

Rambus is More of a Hold

This idea of capital being redirected to AI, resulting in a decline in demand for traditional server components, was confirmed by Rambus management during Q4’s earnings call. It has also resulted in inventory declining at a slower pace than before, especially for DDR4, which is an older version of DRAM used in servers. Thus, as shown in Q1-2024’s outlook table above, the midpoint of the guidance for product revenue, which primarily consists of memory interface chips, is $50 million. This represents a sequential decline from the $53.7 million recorded in Q4.

In these conditions, it is preferable to avoid buying the stock.

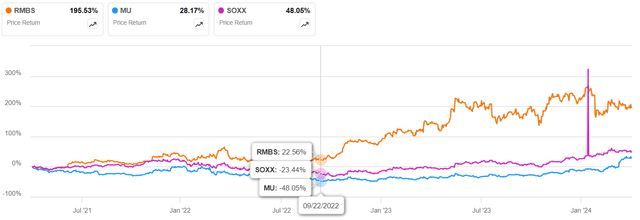

To support my point of view, the chart below shows that its 3-year upside of 195% started around September 2022 after Nvidia started mass-producing its H100s. Now, one can argue that if Nvidia chooses Samsung’s 36GB HBM3e memory for its B100 which will succeed the H200, the stock could rise again, but the fact remains that it has lost its monopoly status in HBM IPs.

Performance charts (seekingalpha.com)

Moreover, Micron is not sitting idle, as it is working on its own 36GB HBM3E. Therefore, after its meteoric rise, one should expect volatility for Rambus’ stock, especially in a period when more market participants expect the U.S. central bank to deliver fewer rate cuts than initially planned. For investors, any delay in cutting rates may be detrimental to equities in general, especially after expectations of rate cuts contributed to the market reaching new heights in 2023.

Finally, investors will note that I am not bearish. The reason is that the company has a large inventory of DDR5 chipsets, which could indirectly benefit from the AI revolution as customers demand more performant servers with the latest processors to process data for traditional flavors of artificial intelligence that do not necessarily require Nvidia’s advanced GPUs and HBMs. For this purpose, Rambus has developed its latest 4th Generation DDR5 RCD memory chipsets, which deliver about 50% more memory performance than was previously available.