When sexually explicit deepfakes of Taylor Swift went viral on X (formerly known as Twitter), millions of her fans came together to bury the AI images with “Protect Taylor Swift” posts. The move worked, but it could not stop the news from hitting every major outlet. In the subsequent days, a full-blown conversation about the harms of deepfakes was underway, with White House press secretary Karine Jean-Pierre calling for legislation to protect people from harmful AI content.

But here’s the deal: while the incident involving Swift was nothing short of alarming, it is not the first case of AI-generated content harming a celebrity’s reputation. Several celebrities and influencers have been targeted by deepfakes over the last few years, and it’s only going to get worse with time.

“With a short video of yourself, you can today create a new video where the dialogue is driven by a script – it’s fun if you want to clone yourself, but the downside is that someone else can just as easily create a video of you spreading disinformation and potentially inflict reputational harm,” Nicos Vekiarides, CEO of Attestiv, a company building tools for validation of photos and videos, told VentureBeat.

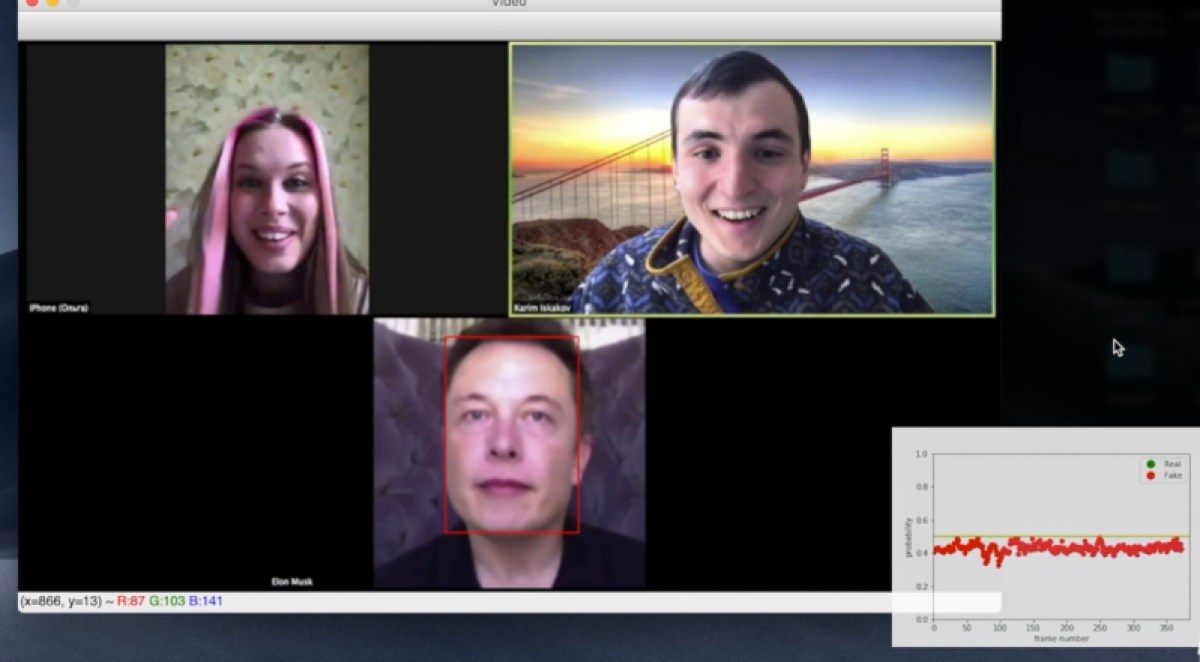

As AI tools capable of creating deepfake content continue to proliferate and become more advanced, the internet is going to be flooded with misleading images and videos. This raises the question: how can people tell what’s real and what’s not?

Understanding deepfakes and their wide-ranging harm

A deepfake can be described as an artificial image, video or audio clip of an individual created with deep learning technology. Such content has been around for several years, but it started making headlines in late 2017, when a Reddit user named ‘deepfakes’ began sharing AI-generated pornographic images and videos.

Initially, these deepfakes largely revolved around face swapping, where the likeness of one person was superimposed onto existing videos and images. Making them took considerable processing power and specialized knowledge. Over the past year or so, however, the rise and spread of text-based generative AI tools has given anyone the ability to create near-realistic manipulated content, portraying actors and politicians in unexpected ways to mislead internet users.

“It’s safe to say that deepfakes are no longer the realm of graphic artists or hackers. Creating deepfakes has become incredibly easy with generative AI text-to-photo frameworks like DALL-E, Midjourney, Adobe Firefly and Stable Diffusion, which require little to no artistic or technical expertise. Similarly, deepfake video frameworks are taking a similar approach with text-to-video such as Runway, Pictory, Invideo, Tavus, etc,” Vekiarides explained.

While most of these AI tools have guardrails to block potentially dangerous prompts or those involving well-known people, malicious actors often find loopholes to bypass them. When investigating the Taylor Swift incident, independent tech news outlet 404 Media found that the explicit images were generated by exploiting gaps (which have since been fixed) in Microsoft’s AI tools. Similarly, Midjourney was used to create AI images of Pope Francis in a puffer jacket, and AI voice platform ElevenLabs was tapped for the controversial Joe Biden robocall.

This kind of accessibility can have far-reaching consequences, from ruining the reputations of public figures and misleading voters ahead of elections to tricking unsuspecting people into financial fraud and bypassing organizations’ verification systems.

“We’ve been investigating this trend for some time and have uncovered an increase in what we call ‘cheapfakes’ which is where a scammer takes some real video footage, usually from a credible source like a news outlet, and combines it with AI-generated and fake audio in the same voice of the celebrity or public figure… Cloned likenesses of celebrities like Taylor Swift make attractive lures for these scams since their popularity makes them household names around the globe,” Steve Grobman, CTO of internet security company McAfee, told VentureBeat.

According to Sumsub’s Identity Fraud report, the number of deepfakes detected globally across all industries increased tenfold in 2023 alone. Crypto accounted for the majority of incidents at 88%, followed by fintech at 8%.

People are concerned

Given the meteoric rise of AI generators and face swap tools, combined with the global reach of social media platforms, people have expressed concerns over being misled by deepfakes. In McAfee’s 2023 Deepfakes survey, 84% of Americans raised concerns about how deepfakes will be exploited in 2024, with more than one-third saying they or someone they know have seen or experienced a deepfake scam.

What’s even more worrying is that the technology powering malicious images, audio and video is still maturing. As it improves, its abuse will become more sophisticated.

“The integration of artificial intelligence has reached a point where distinguishing between authentic and manipulated content has become a formidable challenge for the average person. This poses a significant risk to businesses, as both individuals and diverse organizations are now vulnerable to falling victim to deepfake scams. In essence, the rise of deepfakes reflects a broader trend in which technological advancements, once heralded for their positive impact, are now… posing threats to the integrity of information and the security of businesses and individuals alike,” Pavel Goldman-Kalaydin, head of AI & ML at Sumsub, told VentureBeat.

How to detect deepfakes

As governments continue to do their part to prevent and combat deepfake content, one thing is clear: what we’re seeing now will multiply, because the development of AI is not going to slow down. This makes it critical for the general public to know how to distinguish between what’s real and what’s not.

All the experts who spoke with VentureBeat on the subject converged on two key approaches to deepfake detection: analyzing the content for tiny anomalies and double-checking the authenticity of the source.

Currently, AI-generated images are nearly realistic (researchers at the Australian National University found that people now perceive AI-generated white faces as more real than human faces), while AI videos are well on their way. In both cases, however, there may be inconsistencies that give away that the content is AI-produced.

“If any of the following features are detected — unnatural hand or lips movement, artificial background, uneven movement, changes in lighting, differences in skin tones, unusual blinking patterns, poor synchronization of lip movements with speech, or digital artifacts — the content is likely generated,” Goldman-Kalaydin said when describing anomalies in AI videos.

For photos, Vekiarides from Attestiv recommended looking for missing shadows and inconsistent details among objects, including poorly rendered human features such as hands, fingers and teeth. Matthieu Rouif, CEO and co-founder of Photoroom, pointed to the same artifacts, noting that AI-generated faces also tend to be more symmetrical than real human faces.

So, if a person’s face in an image looks too good to be true, it is likely to be AI-generated. If, on the other hand, a face swap has been performed, there may be visible blending of facial features.

But, again, these methods only work for now. As the technology matures, there’s a good chance these visual flaws will become impossible to spot with the naked eye. This is where the second approach, staying vigilant about the source, comes in.

According to Rouif, whenever a questionable image or video appears in their feed, users should approach it with a dose of skepticism, considering who is behind the content, their potential biases and their incentives for creating it.

“All videos should be considered in the context of its intent. An example of a red flag that may indicate a scam is soliciting a buyer to use non-traditional forms of payment, such as cryptocurrency, for a deal that seems too good to be true. We encourage people to question and verify the source of videos and be wary of any endorsements or advertising, especially when being asked to part with personal information or money,” said Grobman from McAfee.

To further aid verification efforts, technology providers must build sophisticated detection technologies. Some mainstream players, including Google and ElevenLabs, have already started exploring this area with tools that detect whether a piece of content was generated by their respective AI products. McAfee has also launched a project to flag AI-generated audio.

“This technology uses a combination of AI-powered contextual, behavioral, and categorical detection models to identify whether the audio in a video is likely AI-generated. With a 90% accuracy rate currently, we can detect and protect against AI content that has been created for malicious ‘cheapfakes’ or deepfakes, providing unmatched protection capabilities to consumers,” Grobman explained.
